Support tables with non-lowercase names in Druid #7197

dheerajkulakarni wants to merge 1 commit into trinodb:master

Conversation

Hey @findepi @hashhar @Praveen2112, raised a draft PR as I need some inputs from you guys to go ahead,

You are almost correct but looking in the wrong place. The JdbcMetadata we are fetching using

Hey @hashhar, I thought the TrinoDataMetaData class was the base for the JdbcMetaData classes in all the drivers and was referring to it; maybe I was completely wrong. If we agree that it has to return false by default, then instead of trying to set this property in the test cases, I will try to find out why it returns true instead of false and, if possible, correct it.

I think the driver is lying (because it uses a translation layer called Apache Calcite instead of being a true focused JDBC driver). If we know the behaviour of Druid to be one of This turned out to be a bit more complex than expected. Thanks for putting the effort into this @dheerajkulakarni.
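To make the "driver is lying" point concrete, here is a minimal sketch using only `java.sql.DatabaseMetaData`. It assumes a JDBC client that rewrites names according to what the driver reports via `storesUpperCaseIdentifiers()` and `storesLowerCaseIdentifiers()`. The class `IdentifierCaseSketch`, the helper `toRemoteName`, and the proxy-based `fakeMetadata` are hypothetical names for illustration, not Trino or Avatica code.

```java
import java.lang.reflect.Proxy;
import java.sql.DatabaseMetaData;
import java.sql.SQLException;
import java.util.Locale;

final class IdentifierCaseSketch
{
    private IdentifierCaseSketch() {}

    // Hypothetical helper (an assumption, not Trino's actual code): rewrite a name
    // the way the driver's metadata claims the remote system stores identifiers.
    static String toRemoteName(DatabaseMetaData metadata, String name)
            throws SQLException
    {
        if (metadata.storesUpperCaseIdentifiers()) {
            return name.toUpperCase(Locale.ENGLISH);
        }
        if (metadata.storesLowerCaseIdentifiers()) {
            return name.toLowerCase(Locale.ENGLISH);
        }
        return name;
    }

    // Stand-in for a driver: a proxy that answers true only for the named
    // boolean method and false for every other boolean method.
    static DatabaseMetaData fakeMetadata(String methodReturningTrue)
    {
        return (DatabaseMetaData) Proxy.newProxyInstance(
                DatabaseMetaData.class.getClassLoader(),
                new Class<?>[] {DatabaseMetaData.class},
                (proxy, method, args) -> method.getName().equals(methodReturningTrue));
    }

    public static void main(String[] args)
            throws SQLException
    {
        // The metadata claims upper-case storage, so the lookup name becomes
        // "CAMELCASE" and no longer matches a case-sensitive table "CamelCase".
        DatabaseMetaData claimsUpper = fakeMetadata("storesUpperCaseIdentifiers");
        System.out.println(toRemoteName(claimsUpper, "CamelCase")); // prints CAMELCASE

        // The metadata reports neither upper nor lower: the name is passed through.
        DatabaseMetaData claimsNeither = fakeMetadata("none");
        System.out.println(toRemoteName(claimsNeither, "CamelCase")); // prints CamelCase
    }
}
```

If the remote system is in fact case-sensitive, the first case is the failure mode: the rewritten name never matches the stored one, regardless of how the table was created.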


Looks like the Avatica driver has some connection properties that affect the JdbcMetadata. See https://calcite.apache.org/docs/adapter.html#jdbc-connect-string-parameters. The default behavior is inherited from Oracle (see The relevant ones to set are

Thank you so much for the info @hashhar, I will go through this and will make the code changes accordingly!

52b9f5f to d2ec83e


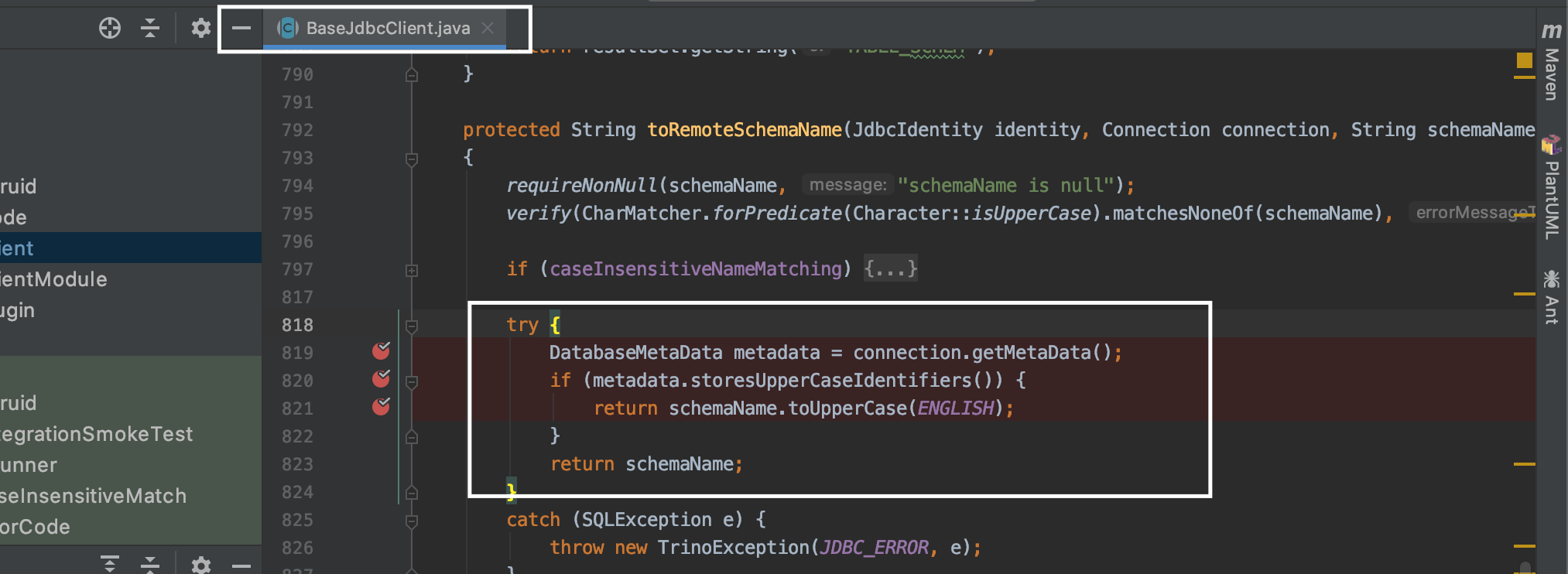

@hashhar as discussed as there is no way to set these properties at JDBC Driver level, overridden

Let's also create an issue with either Druid (or more correctly Apache Calcite, since they build and ship the Avatica driver) to allow the configuration properties to be passed to Calcite so that JdbcMetadata isn't lying about the actual behaviour. Thanks for the work, I will review this.

hashhar left a comment


Some changes requested.

In particular the test for testTableNameClash seems incorrect.


Works until it breaks.

Can we read the indexTask as a JsonNode and replace the two nodes we are interested in and return the modified JsonNode back as a string?


I would like the method name to be clearer but can't think of anything better.

We are basically taking an existing index task, changing the destination name and ingesting under the new name. Something like a CREATE TABLE AS SELECT * FROM file.

    import static java.util.Objects.requireNonNull;

    final class RemoteTableNameCacheKey
    public final class RemoteTableNameCacheKey


Please create a GitHub issue here mentioning the reason why you did it this way and what needs to be fixed before we can do it the "correct" way.

Add a TODO comment here referring to that issue so that we can eventually clean up the code instead of changing the API in unneeded ways.


Same TODO over the now public constructor.

    if (tableHandles.isEmpty()) {
        return Optional.empty();
    }


nit: revert whitespace changes.

    try (ResultSet resultSet = getTables(connection, Optional.of(remoteSchema), Optional.of(remoteTable))) {
        List<JdbcTableHandle> tableHandles = new ArrayList<>();
        while (resultSet.next()) {
            tableHandles.add(new JdbcTableHandle(


    tableHandles.add(new JdbcTableHandle(
            schemaTableName,
            getRemoteTable(resultSet)));

    private static RemoteTableName getRemoteTable(ResultSet resultSet)
            throws SQLException
    {
        return new RemoteTableName(
                Optional.of(DRUID_CATALOG),
                Optional.ofNullable(resultSet.getString("TABLE_SCHEM")),
                resultSet.getString("TABLE_NAME"));
    }

            .row("shippriority", "bigint", "", "") // Druid doesn't support int type
            .row("totalprice", "double", "", "")
            .build();
    MaterializedResult actualColumns = computeActual("DESCRIBE " + "CamelCase");


No need for string concat here.

            .row("totalprice", "double", "", "")
            .build();
    MaterializedResult actualColumns = computeActual("DESCRIBE " + "CamelCase");
    Assert.assertEquals(actualColumns, expectedColumns);

        copyAndIngestTpchData(getQueryRunner().execute(SELECT_FROM_REGION + " LIMIT 10"), this.druidServer, "region", "camelcase");
    }
    catch (AssertionError e) {
        Assert.assertEquals(e.getMessage(), "Datasource camelcase not loaded expected [true] but found [false]");


Can you share the entire stack trace?

This exception can also happen if something fails on the Druid end rather than on our side.

            throws IOException, InterruptedException
    {
        try {
            //ingesting data with already existing table name in lowercase which should fail


I'd expect ingestion to work since Druid is case-sensitive. But querying such tables should fail from Trino.
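The expectation above (both datasources can exist remotely, but a case-insensitive lookup cannot pick one) can be sketched in plain Java. This is an illustrative sketch only: `CaseInsensitiveLookupSketch` and `resolve` are hypothetical names, and the exception type and message are assumptions, not what Trino actually throws.

```java
import java.util.ArrayList;
import java.util.Collection;
import java.util.List;
import java.util.Locale;
import java.util.Optional;

final class CaseInsensitiveLookupSketch
{
    private CaseInsensitiveLookupSketch() {}

    // Hypothetical resolver: find the single remote name that equals the
    // requested name when both are lower-cased; fail if more than one matches.
    static Optional<String> resolve(Collection<String> remoteNames, String requested)
    {
        String key = requested.toLowerCase(Locale.ENGLISH);
        List<String> matches = new ArrayList<>();
        for (String remoteName : remoteNames) {
            if (remoteName.toLowerCase(Locale.ENGLISH).equals(key)) {
                matches.add(remoteName);
            }
        }
        if (matches.size() > 1) {
            // Assumed error shape, for illustration only
            throw new IllegalStateException("Ambiguous name '" + requested + "': matches " + matches);
        }
        return matches.stream().findFirst();
    }

    public static void main(String[] args)
    {
        // Only one remote table differs by case: the lookup succeeds.
        System.out.println(resolve(List.of("CamelCase", "orders"), "camelcase")); // prints Optional[CamelCase]

        // Two remote tables differ only by case: the lookup must fail.
        try {
            resolve(List.of("CamelCase", "camelcase"), "camelcase");
        }
        catch (IllegalStateException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

Under this model a test for the clash would ingest both names successfully and then assert that the query fails, rather than asserting that ingestion fails.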

    MaterializedResult materializedRows = computeActual("SELECT * FROM druid.druid.CAMELCASE");
    Assert.assertEquals(materializedRows.getRowCount(), 10);
    MaterializedResult materializedRows1 = computeActual("SELECT * FROM druid.CamelCase");
    MaterializedResult materializedRows2 = computeActual("SELECT * FROM druid.camelcase");


Can be simplified using assertQuery("SELECT COUNT(1) FROM druid.druid.camelcase", "VALUES 10")

👋 @dheerajkulakarni - this PR is inactive and doesn't seem to be under development. If you'd like to continue work on this at any point in the future, feel free to re-open.

This PR addresses #6850

Changes in this PR

getTableHandle(ConnectorSession session, SchemaTableName schemaTableName)